Why Most “AI for Facilities” Is Still Just Expensive ChatGPT in a Hard Hat

Roughly 95% of AI pilots in FM failed to deliver meaningful value in 2025. Here’s why 2026 won’t fix itself, and what separates tools that work from tools that just demo well.

Let’s open with the number the industry keeps burying in footnotes.

According to multiple 2025 reports across enterprise AI deployments, roughly 95% of generative AI pilots in operational and facilities contexts failed to deliver meaningful, scalable value. Not “underperformed slightly.” Failed. Stalled after the pilot. Got quietly deprioritised. Produced outputs that looked impressive in a boardroom presentation and were ignored by the people doing the actual work.

This is not an indictment of AI broadly. It is an indictment of how the facilities industry has been sold tools that were never designed for its actual operating conditions.

Before an AI system can do anything useful in a building, someone has to make the building’s data readable. In most real deployments, that process consumes 70 to 75 percent of the total project budget. Legacy BACnet controllers from 2003. Modbus devices that predate the smartphone. Sensor naming conventions that differ not just between buildings but between floors of the same building. Data gaps that nobody documented. Sensor drift that nobody flagged.

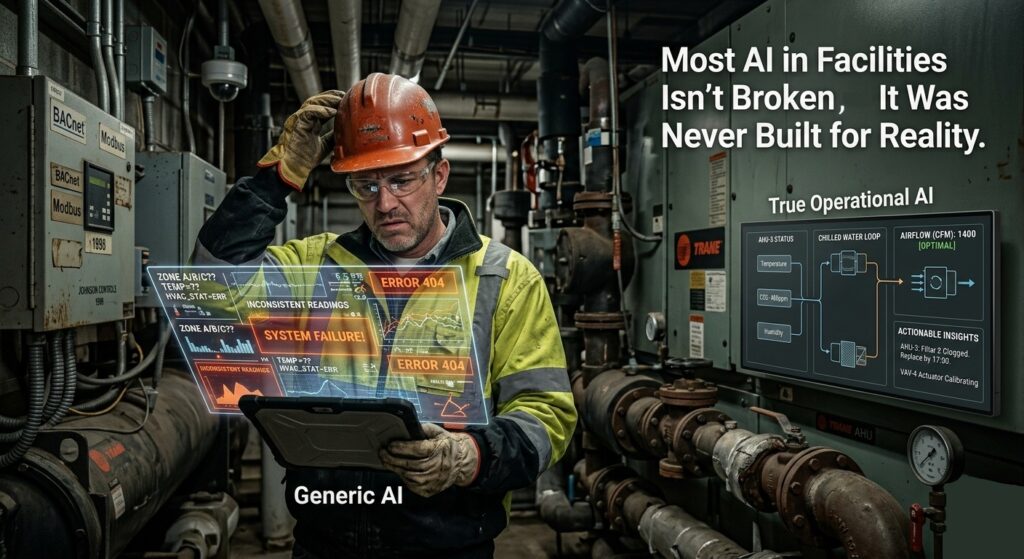

Generic AI lands on top of this environment and tries to make sense of it. Sometimes it does. More often, it generates confident, fluent, completely unreliable outputs from corrupted inputs, and nobody downstream knows the difference until a recommendation gets acted on and makes things worse.

That is the starting point. Any tool that does not address it first is not a facilities AI platform. It is a dashboard with a chatbot glued to the front.

What Generic AI Tools Actually Do

To be direct: natural language interfaces for operational data are useful for a narrow set of tasks. Ad hoc reporting. Helping non-technical stakeholders pull figures without writing SQL. Giving an FM director a fast answer to “what was our average power draw last month.”

The ceiling arrives almost immediately.

Ask a generic AI system why CO2 levels in Zone 4 spiked last Tuesday and you will get an answer. It will be structured, confident, and built on statistical pattern matching across whatever data it can access. It will not know that CO2 rise combined with stable humidity and a simultaneous drop in supply air volume is a specific signature pointing to extract fan failure rather than occupancy. It has not been trained on that. It does not carry an embedded model of how HVAC, air handling, and occupancy systems interact physically.

The result is an answer that sounds authoritative and may be completely wrong. In low-stakes contexts, that is annoying. In facility management, where misdiagnosis drives missed maintenance windows, unplanned downtime, energy waste, and in some cases compliance failures, it is genuinely costly.
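To make the Zone 4 example concrete, here is a minimal sketch of that signature written as an explicit rule. The `ZoneWindow` type, the function name, and all three thresholds are hypothetical and chosen only for illustration; a real diagnostic layer would tune them per zone and weigh far more evidence.

```python
from dataclasses import dataclass


@dataclass
class ZoneWindow:
    """Change in each reading across the window under investigation."""
    co2_delta_ppm: float         # rise in CO2 concentration
    humidity_delta_pct: float    # change in relative humidity, percentage points
    supply_air_delta_pct: float  # percentage change in supply air volume


# Hypothetical thresholds, for illustration only.
CO2_RISE_PPM = 200.0
HUMIDITY_STABLE_BAND = 3.0
AIRFLOW_DROP_PCT = -15.0


def diagnose_co2_spike(w: ZoneWindow) -> str:
    """Check a CO2 spike against the extract fan failure signature."""
    co2_rising = w.co2_delta_ppm >= CO2_RISE_PPM
    humidity_stable = abs(w.humidity_delta_pct) <= HUMIDITY_STABLE_BAND
    airflow_dropped = w.supply_air_delta_pct <= AIRFLOW_DROP_PCT

    if not co2_rising:
        return "no_co2_spike"
    if humidity_stable and airflow_dropped:
        # CO2 up, humidity flat, supply air down: the signature from the text.
        return "suspect_extract_fan_failure"
    return "needs_further_investigation"


window = ZoneWindow(co2_delta_ppm=350.0, humidity_delta_pct=1.0,
                    supply_air_delta_pct=-25.0)
print(diagnose_co2_spike(window))  # suspect_extract_fan_failure
```

The point is not that three `if` statements solve diagnostics. It is that the rule encodes physical knowledge a general-purpose language model does not carry by default.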

Why “Domain-Specific” Is Not Enough on Its Own

Here is the part that vendor marketing skips: calling a tool “purpose-built for FM” does not make it one. A significant portion of platforms wearing that label still fail in deployment for the same predictable reasons.

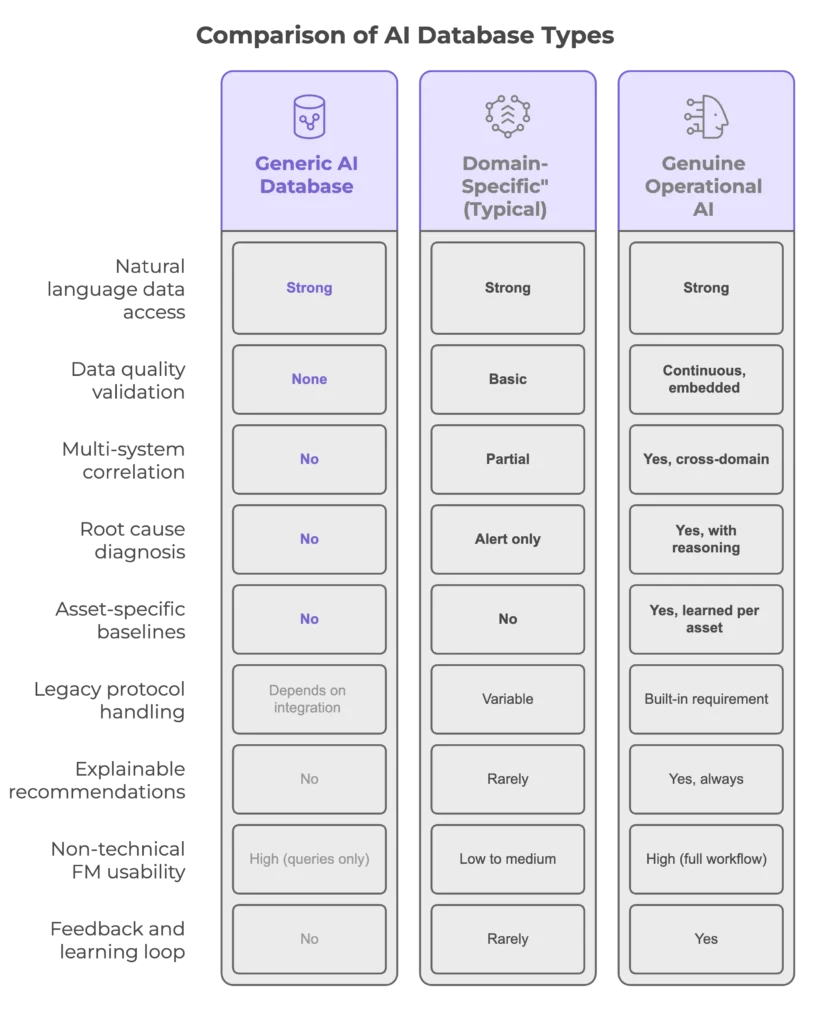

They are trained on clean data that does not exist in the field. Idealised sensor readings. Complete time series. Consistent asset labelling. Real buildings do not look like that, and models calibrated on lab-quality data perform poorly against operational reality.

Their outputs are black boxes. An alert saying “anomaly detected in AHU-3” is barely more useful than a threshold alarm from 2009. Without a causal explanation, a confidence level, and a recommended action with reasoning, the FM team has to investigate from scratch. Noisy, unexplained alerts get ignored. Systems that get ignored get switched off.

They apply universal baselines rather than learning asset-specific behaviour. Building A’s normal return air temperature profile is not Building B’s. A model that cannot distinguish between what is normal for a specific asset and what is genuinely anomalous will generate constant false positives. FM teams are already stretched thin. False positives are budget and attention they do not have.

They assume a level of technical capability that most FM teams do not have. The skills gap in facilities data analytics is not a minor inconvenience. More than 90% of FM organisations report lacking in-house capability to configure, maintain, or critically evaluate AI-generated outputs. A platform that requires a data scientist to interpret its recommendations is not an FM tool. It is a liability.
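The baseline point is the easiest of these to show in code. The sketch below is one simple way to do it, assuming a plain z-score against each asset's own history; the `Alert` type, the function name, the asset IDs, and the `z_limit` default are all hypothetical. It also attaches a short explanation to the alert, since an unexplained flag is the failure mode described above.

```python
from dataclasses import dataclass
from statistics import mean, stdev
from typing import Optional, Sequence


@dataclass
class Alert:
    asset_id: str
    reading: float
    baseline_mean: float
    z_score: float
    explanation: str


def check_against_own_baseline(asset_id: str, history: Sequence[float],
                               reading: float,
                               z_limit: float = 3.0) -> Optional[Alert]:
    """Compare a reading with this asset's own history, not a universal norm."""
    if len(history) < 2:
        return None  # not enough history to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return None  # flat history: z-score undefined, skipped in this sketch
    z = (reading - mu) / sigma
    if abs(z) < z_limit:
        return None  # within this asset's normal variation
    return Alert(
        asset_id=asset_id,
        reading=reading,
        baseline_mean=mu,
        z_score=z,
        explanation=(f"{reading:.1f} is {z:+.1f} standard deviations from "
                     f"this asset's own mean of {mu:.1f}"),
    )


# Illustrative return air temperatures (deg C) for two buildings.
history_a = [21.8, 22.1, 22.0, 21.9, 22.2, 22.0]
history_b = [25.7, 26.2, 26.0, 25.9, 26.3, 25.9]

alert_a = check_against_own_baseline("building-a-ahu", history_a, 26.0)
alert_b = check_against_own_baseline("building-b-ahu", history_b, 26.0)
print(alert_a is not None, alert_b is None)  # True True
```

The same 26.0 reading is anomalous for Building A and unremarkable for Building B. A single universal threshold would get one of them wrong.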

What a Real Operational AI Failure Looks Like

Here is a scenario drawn from the kind of deployment that does not make it into case studies because nobody wants to publicise it.

A large mixed-use commercial building runs a generic AI analytics layer over its BMS data. Over a period of four months, a chiller unit operates at degraded efficiency. The AI flags occasional anomalies but cannot connect declining performance metrics across condenser water temperature, chiller power draw, and supply chilled water temperature into a coherent diagnosis. Each flag gets reviewed individually and closed as within-range variation.

By the time a fault is identified through a scheduled maintenance visit, the chiller has been running at significantly reduced efficiency for 17 weeks. The combined cost: roughly 85,000 pounds in excess energy consumption, a missed window for a low-cost refrigerant top-up that becomes a full compressor replacement at four times the price, and a carbon reporting discrepancy that requires correction across two quarterly ESG submissions.

The AI was running the whole time. It just could not see what it was looking at.

A system capable of cross-referencing those three data streams against each other, applying domain knowledge about chiller performance degradation signatures, and surfacing a specific diagnosis with a recommended intervention would have flagged the issue within days of it developing. The cost difference is not marginal.
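A rough sketch of what cross-referencing can mean in practice: look at the weekly trend in each stream rather than at each reading in isolation, then ask whether the streams are drifting together. This is an illustration only. It assumes that degradation shows up as all three streams creeping upward, and the stream names, drift limits, and sample values are hypothetical rather than taken from the scenario.

```python
from typing import Dict, List, Sequence

# Hypothetical upward drift limits per week, for illustration only.
DRIFT_LIMITS: Dict[str, float] = {
    "condenser_water_temp_c": 0.05,
    "chiller_power_kw": 0.5,
    "chw_supply_temp_c": 0.03,
}


def weekly_slope(values: Sequence[float]) -> float:
    """Least-squares slope of evenly spaced weekly averages."""
    n = len(values)
    if n < 2:
        return 0.0
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def drifting_streams(weekly_means: Dict[str, Sequence[float]]) -> List[str]:
    """Names of the streams whose upward trend exceeds its limit."""
    return [name for name, limit in DRIFT_LIMITS.items()
            if weekly_slope(weekly_means[name]) > limit]


def diagnose_chiller(weekly_means: Dict[str, Sequence[float]]) -> str:
    drifting = drifting_streams(weekly_means)
    if len(drifting) == len(DRIFT_LIMITS):
        return "correlated_degradation"  # all streams drifting together
    if drifting:
        return "isolated_drift"          # worth a look, not yet a pattern
    return "stable"


# Six weeks of made-up weekly averages; none of these values is extreme
# on its own, which is why per-reading review would close each flag.
degrading = {
    "condenser_water_temp_c": [29.0, 29.1, 29.2, 29.3, 29.4, 29.5],
    "chiller_power_kw": [180.0, 181.0, 182.0, 183.0, 184.0, 185.0],
    "chw_supply_temp_c": [6.0, 6.05, 6.1, 6.15, 6.2, 6.25],
}
print(diagnose_chiller(degrading))  # correlated_degradation
```

Each stream here moves only slightly week to week. It is the agreement between them that carries the diagnosis, and that is exactly what individual flag review misses.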

The Integration Problem Nobody Budgets For

This point deserves more than a paragraph because it is where most projects die.

Getting legacy building systems to produce AI-legible data is not a configuration task. It is an engineering project. Old BACnet controllers communicate in ways that modern data pipelines were not designed to receive. Protocol translation layers introduce latency and data loss. Naming normalisation across a multi-site portfolio can take months. And once the data is flowing, continuous validation is required because sensor drift, communication failures, and BMS updates can silently corrupt the feed at any time.

Any vendor that does not address this in detail during the sales conversation is either unaware of it or hoping you are. Ask specifically: what does your onboarding process look like for a building with a 2005-era BMS running mixed protocols and incomplete metadata? The answer will tell you a great deal about whether the tool was built for the real world or for a clean-data demo environment.
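For a sense of what continuous validation involves at its most basic, here is a minimal sketch of three checks run on every batch of readings: gaps, out-of-range values, and flatlined sensors. The function name, the 15-minute interval, the valid range, and the flatline length are hypothetical defaults. Detecting slow sensor drift needs more than this, typically a reference sensor or a model of expected behaviour.

```python
from typing import List, Sequence, Tuple

Reading = Tuple[int, float]  # (unix timestamp in seconds, value)


def validate_feed(readings: Sequence[Reading],
                  expected_interval_s: int = 900,
                  valid_range: Tuple[float, float] = (-10.0, 60.0),
                  max_flat_run: int = 8) -> List[str]:
    """Return a list of human-readable data quality issues, empty if clean."""
    issues: List[str] = []
    low, high = valid_range
    flat_run = 1
    for i, (ts, value) in enumerate(readings):
        if not low <= value <= high:
            issues.append(f"out_of_range at {ts}: {value}")
        if i == 0:
            continue
        prev_ts, prev_value = readings[i - 1]
        # Allow some jitter before calling a late reading a gap.
        if ts - prev_ts > expected_interval_s * 1.5:
            issues.append(f"gap before {ts}: {ts - prev_ts}s since previous reading")
        # A long run of identical values often means a stuck sensor.
        flat_run = flat_run + 1 if value == prev_value else 1
        if flat_run == max_flat_run:
            issues.append(f"flatline ending at {ts}: {max_flat_run} identical readings")
    return issues


feed = [(0, 21.0), (900, 21.2), (3600, 21.1), (4500, 99.0)]
for issue in validate_feed(feed):
    print(issue)
```

On this sample feed the function reports one gap and one out-of-range value. The useful design choice is that it runs on every batch, so a feed that was clean at onboarding and silently broke six months later still gets caught.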

What the Comparison Actually Looks Like

The distance between column two and column three is where most “domain-specific” marketing lives. The distance between column two and column four is what actually changes operational outcomes.

Agentic AI Is Coming. Most Teams Are Not Ready.

The next meaningful shift in operational AI is already visible. Agentic systems, tools that do not just recommend but act, are moving from research into early deployment. In facilities, that means AI that can adjust setpoints, raise work orders in a CMMS, or escalate through the correct channels without waiting for human sign-off at every step.

The technology is arriving faster than the governance frameworks required to deploy it responsibly. Before an FM team can trust an agentic system to act autonomously on a building, they need confidence in three things: the quality of the data feeding it, the accuracy of the diagnostic layer interpreting it, and the auditability of the actions it takes. Most organisations currently have none of these in place.

The teams that will extract genuine value from agentic AI over the next three years are the ones building that foundation now. Not piloting another chatbot. Building the data infrastructure, the domain-trained reasoning layer, and the workflow integration that make autonomous action safe enough to trust.
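Those three preconditions map fairly directly onto a gate that sits between an agent's proposal and the building. The sketch below is an assumption about how such a gate might look, not a description of any particular product: `ProposedAction`, `AuditLog`, `gate`, and the 0.9 confidence threshold are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List

MIN_CONFIDENCE = 0.9  # hypothetical threshold, for illustration only


@dataclass
class ProposedAction:
    asset_id: str
    description: str
    data_quality_ok: bool          # did the input data pass validation?
    diagnostic_confidence: float   # 0.0 to 1.0, from the diagnostic layer


@dataclass
class AuditLog:
    entries: List[Dict[str, str]] = field(default_factory=list)

    def record(self, action: ProposedAction, decision: str, reason: str) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "asset_id": action.asset_id,
            "action": action.description,
            "decision": decision,
            "reason": reason,
        })


def gate(action: ProposedAction, log: AuditLog) -> str:
    """Decide whether an action may run unattended; always log the decision."""
    if not action.data_quality_ok:
        decision, reason = "escalate_to_human", "input data failed validation"
    elif action.diagnostic_confidence < MIN_CONFIDENCE:
        decision, reason = "escalate_to_human", "diagnostic confidence below threshold"
    else:
        decision, reason = "execute", "data validated and confidence above threshold"
    log.record(action, decision, reason)
    return decision


log = AuditLog()
action = ProposedAction("AHU-3", "lower supply air setpoint by 1 C",
                        data_quality_ok=True, diagnostic_confidence=0.72)
print(gate(action, log))  # escalate_to_human
```

Every decision is logged, including the ones that are escalated. Without that record there is no way to review after the fact why the system acted or declined to.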

An Evaluation Checklist That Actually Has Teeth

1. Does it validate data quality continuously, before and during analysis?
2. Can it explain the cause of an anomaly in terms a maintenance engineer can act on?
3. Does it correlate across HVAC, electrical, air quality, and occupancy data simultaneously?
4. How does it perform on a real building with legacy protocols and incomplete sensor metadata?
5. Can FM staff use it without a data analyst in the loop?
6. Does it learn the specific operational profile of individual assets?
7. Can it quantify the energy cost and carbon impact of identified faults?
8. What does the implementation process look like for a portfolio with fragmented BMS infrastructure?
9. What happens when its recommendation is wrong and the FM team knows it?

If a vendor struggles with questions seven, eight, and nine, that is your answer.

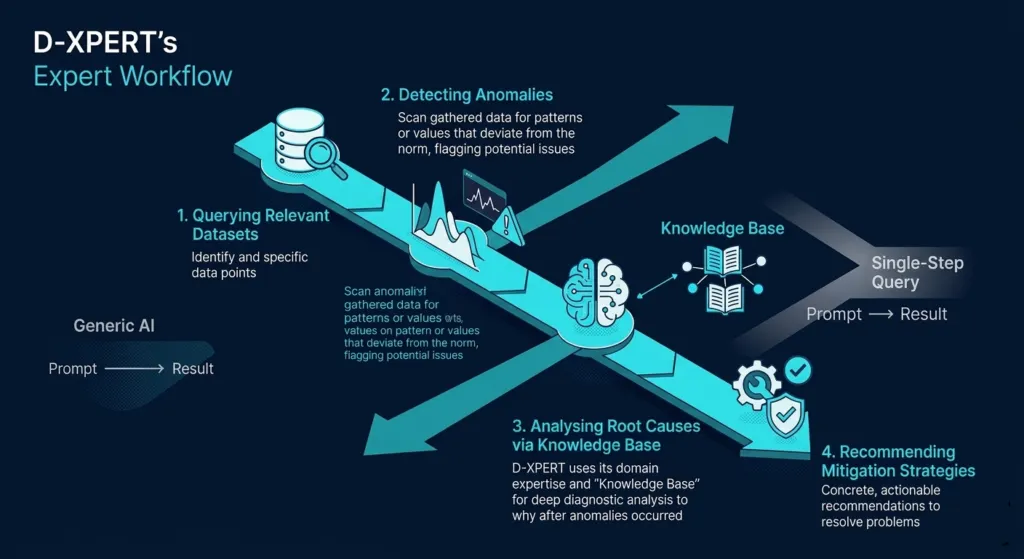

Why D-XPERT Exists

D-XPERT was built specifically to operate in the conditions described above. Not clean pilot environments. Actual buildings with legacy systems, partial datasets, sensor drift, and FM teams who do not have time to babysit an AI.

Its diagnostic layer does not just flag anomalies. It identifies causes, connecting environmental sensor patterns to specific physical or equipment faults and surfacing recommendations with transparent reasoning that an FM team can evaluate and act on directly. Its data validation is continuous, not a one-time onboarding step. Its baselines are asset-specific, not universal. And it is designed to be used by the people running the building, not by the data team who set it up.

If your portfolio is running on legacy infrastructure, generating noisy alerts that nobody trusts, and watching AI pilots get deprioritised after quarter two, the problem is not that AI does not work in facilities management. The problem is that the tools being sold as FM AI were never built for it.

There is a difference. It is worth understanding before the next procurement cycle.